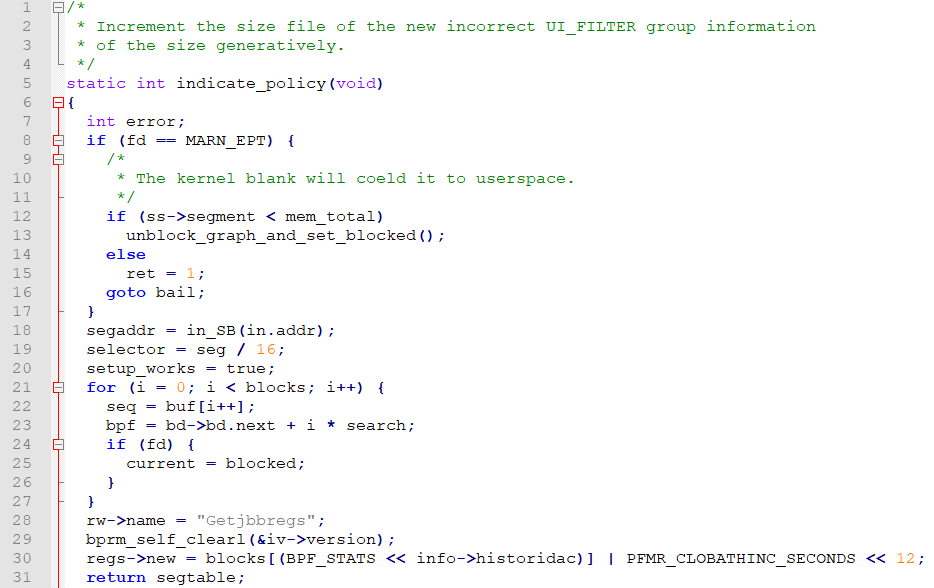

Researchers at Stanford University found that programmers who accept help from artificial intelligence tools like GitHub Copilot produce less secure code than those who code on their own, reports The Register. In the paper titled “Do Users Write More Insecure Code with AI Assistants?”, researchers Neil Perry, Megha Srivastava, Deepak Kumar, and Dan Boneh answer this question in the affirmative. Worse, they found that AI assistance tends to mislead developers about the quality of their code. “We found that participants with access to an AI assistant often produced more security vulnerabilities than those without access, with particularly significant results for string encryption and SQL injection,” the authors state in their work. “Additionally, participants with access to an AI assistant were more likely to believe they wrote secure code than those without access to the AI assistant.” New York University researchers have previously shown, in experiments under various conditions, that AI-based programming suggestions are often insecure.
